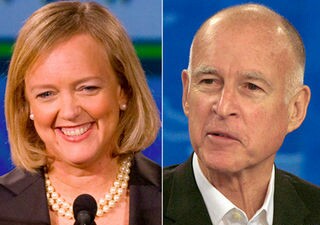

As the campaigns in California were nearing an end, I ran a story on the Field Poll showing both Jerry Brown and Barbara Boxer moving from close races to big leads. I pay particular attention to the Field Poll because it has long been regarded as the best of the statewide polls.

I spent a good amount of time in graduate school studying polling and following the debates over the proper way to poll. I recall that in the middle of the decade there was a long debate among pollsters about which factors should be weighted and which should simply be measured. Chief among them was the question of party identification.

Both sides of that debate have their adherents, but as one might guess, how a pollster sets that number in its sample makes a huge difference to the accuracy of its polls.

With the advent of the internet age, a lot of political observers, rather than looking at a single poll, look at the average across polls. That tends to smooth out survey-to-survey noise and show the overall picture. Another approach is simply to ignore the raw numbers and look for the trend within an individual poll. The logic there is that the internal methodology of a given poll stays consistent, so you can assess whether a candidate is doing better or worse by tracking that one poll over time.
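The two approaches can be sketched in a few lines of Python. This is a minimal illustration, assuming each poll is reduced to a single Democrat-minus-Republican margin in points; the function names `poll_average` and `poll_trend` are hypothetical, and real aggregators typically weight polls by recency, sample size and pollster record rather than taking a plain mean.

```python
from statistics import mean


def poll_average(margins):
    """Unweighted average of several polls' margins (Democrat minus Republican, in points)."""
    if not margins:
        raise ValueError("need at least one poll")
    return mean(margins)


def poll_trend(releases):
    """Change in a single pollster's margin from its first release to its latest.

    Because the same methodology is used each time, the direction of the
    change is treated as more informative than the raw level.
    """
    if len(releases) < 2:
        raise ValueError("need at least two releases from the same poll")
    return releases[-1] - releases[0]
```

Averaging cancels out some of each pollster's quirks; tracking one poll keeps the quirks constant so only the movement shows.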

On the whole I prefer the averaging approach, but it is worth noting that not all polls perform equally well. Some polls are more accurate over time, and some pollsters are more accurate in one year than in another.

It is worth noting that when I ran my article on the Field Poll, one of the conservative bloggers pointed to the Rasmussen Poll, which showed a much more modest 3- to 4-point lead for both Brown and Boxer. I already knew that the Rasmussen Poll is almost a Republican poll: it conducts polling for Fox, and it tends to be the most favorable to Republicans. So the fact that even Rasmussen showed both candidates ahead indicated that they did indeed have solid leads.

It turns out the data and results back up my hunch at the time.

On November 3, the LA Times blog did a piece on which pollsters called California’s top races correctly.

They found that the L.A. Times/USC Poll and the Field Poll correctly projected comfortable wins, and that the Rasmussen poll did the worst.

Of interest was the fact that the Republican candidate attacked the Times/USC Poll, “saying incorrectly that Times polls always favored candidates the paper had endorsed.”

However, the paper got the last laugh as, “In the end, Brown won by 12 points and Boxer by nine. The poll that came closest to nailing the results: The L.A. Times/USC survey, which had projected a 13-point margin for Brown and an eight-point margin for Boxer. Field, which had projected margins of 10 points for Brown and eight for Boxer, came in a close second.”

Rasmussen, as I said, did the worst. “The worst record? The Rasmussen surveys, which were conducted for Fox News and Rasmussen’s own survey website [sic]. Those polls projected a Boxer margin of three points and a Brown win by four.”
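Those reported margins make it easy to check the ranking with the two measures Nate Silver uses in his national analysis: average error (how far off a poll was, regardless of direction) and average bias (which direction it missed in). This is a minimal sketch using only the figures quoted above; `score` is a hypothetical helper, margins are Democrat minus Republican in points, and Silver's exact procedure may differ.

```python
# Final margins quoted by the L.A. Times blog (Democrat minus Republican, points).
ACTUAL = {"Brown": 12, "Boxer": 9}

# Projected margins quoted by the same piece.
PROJECTED = {
    "L.A. Times/USC": {"Brown": 13, "Boxer": 8},
    "Field": {"Brown": 10, "Boxer": 8},
    "Rasmussen": {"Brown": 4, "Boxer": 3},
}


def score(projected, actual):
    """Return (average absolute error, average signed bias) in points.

    A negative bias means the poll understated the Democrat, i.e. leaned Republican.
    """
    diffs = [projected[race] - actual[race] for race in actual]
    error = sum(abs(d) for d in diffs) / len(diffs)
    bias = sum(diffs) / len(diffs)
    return error, bias


for pollster, margins in PROJECTED.items():
    error, bias = score(margins, ACTUAL)
    print(f"{pollster:15} error {error:.1f}  bias {bias:+.1f}")
```

Run as written, it gives an average miss of 1 point for the Times/USC poll, 1.5 for Field and 7 for Rasmussen, with every Rasmussen miss in the Republican direction.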

One big factor is that the Field Poll and the Times/USC Poll now call both landlines and cellphones, whereas Rasmussen does not. That makes Rasmussen less likely to reach younger voters, who tend to lean Democratic.

In our household we have a landline, but we rarely answer it; our cellphones are our primary phones, and the landline is mostly a backup and a fax line.

The other key difference is the determination of who is a likely voter. Writes the blog, “The Times/USC survey based its likely-voter model on questions about a person’s enthusiasm about voting this year, the respondent’s expressed certainty about voting and his or her voting history. Some Republican analysts said that the emphasis on past voter history was screening out Republicans who had not voted in 2006 and 2008 but who would show up this year. In the end, those hypothetical voters turned out to be something of a mirage. Exit polls this year showed an electorate that was quite similar to the group that voted in the 2006 midterm elections.”

All of this means that polling is partly an art, not purely a science: pollsters have to model the expected universe of voters and get it right. Flawed assumptions about the voting population will produce a flawed or skewed poll result.

Nationally, it turns out, Rasmussen did quite poorly overall, and observers ought to be cautious before citing the Rasmussen poll as evidence of anything more than a trend. Nate Silver, a polling guru who runs the website FiveThirtyEight.com, wrote a blog post in the New York Times on November 4.

His research found, “On Tuesday, polls conducted by the firm Rasmussen Reports — which released more than 100 surveys in the final three weeks of the campaign, including some commissioned under a subsidiary on behalf of Fox News — badly missed the margin in many states, and also exhibited a considerable bias toward Republican candidates.”

He continues, “The 105 polls released in Senate and gubernatorial races by Rasmussen Reports and its subsidiary, Pulse Opinion Research, missed the final margin between the candidates by 5.8 points, a considerably higher figure than that achieved by most other pollsters. Some 13 of its polls missed by 10 or more points, including one in the Hawaii Senate race that missed the final margin between the candidates by 40 points, the largest error ever recorded in a general election in FiveThirtyEight’s database, which includes all polls conducted since 1998.”

Moreover, not only were they inaccurate, they were biased. Mr. Silver writes, “Rasmussen’s polls were quite biased, overestimating the standing of the Republican candidate by almost 4 points on average.”

He continues, “In just 12 cases, Rasmussen’s polls overestimated the margin for the Democrat by 3 or more points. But it did so for the Republican candidate in 55 cases — that is, in more than half of the polls that it issued.”

“If one focused solely on the final poll issued by Rasmussen Reports or Pulse Opinion Research in each state — rather than including all polls within the three-week interval — it would not have made much difference. Their average error would be 5.7 points rather than 5.8, and their average bias 3.8 points rather than 3.9,” writes Silver.

Mr. Silver found little difference between the polls branded as Rasmussen Reports and those commissioned on behalf of Fox News through its subsidiary. He noted, “Both sets of surveys used an essentially identical methodology,” and found, “Polls branded as Rasmussen Reports missed by an average of 5.9 points and had a 3.9 point bias. The polls it commissioned on behalf of Fox News had a 5.1 point error, and a 3.6 point bias.”

Rasmussen polls were increasingly criticized during the election cycle.

“We have critiqued the firm for its cavalier attitude toward polling convention,” according to Mr. Silver. “Rasmussen, for instance, generally conducts all of its interviews during a single, 4-hour window; speaks with the first person it reaches on the phone rather than using a random selection process; does not call cellphones; does not call back respondents whom it misses initially; and uses a computer script rather than live interviewers to conduct its surveys. These are cost-saving measures which contribute to very low response rates and may lead to biased samples.”

This gets to the point I made earlier about the debate over party identification. Rasmussen fixes its party-identification assumptions in advance, which can distort the results if those assumptions overstate one party’s share of the electorate.

Writes Mr. Silver, “Rasmussen also weights their surveys based on preordained assumptions about the party identification of voters in each state, a relatively unusual practice that many polling firms consider dubious since party identification (unlike characteristics like age and gender) is often quite fluid.”
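Why a fixed party-identification target matters can be seen with a little arithmetic. In a minimal sketch of weighting to assumed party-ID shares (a generic illustration, not Rasmussen's actual procedure, which the firm has not detailed), the reported margin is each party group's margin multiplied by its assumed share of the electorate, summed. The function name `topline_margin` and every number below are hypothetical.

```python
def topline_margin(group_margins, assumed_shares):
    """Overall Dem-minus-Rep margin after weighting party-ID groups to assumed shares.

    group_margins:  {party: margin among respondents in that group, in points}
    assumed_shares: {party: assumed fraction of the electorate}, summing to 1
    Weighting every group to a fixed share makes the topline simply the
    share-weighted average of the group margins.
    """
    if abs(sum(assumed_shares.values()) - 1.0) > 1e-9:
        raise ValueError("assumed shares must sum to 1")
    return sum(assumed_shares[party] * margin for party, margin in group_margins.items())


if __name__ == "__main__":
    # Hypothetical survey responses, identical in both runs.
    groups = {"Dem": 80, "Rep": -80, "Ind": 0}
    # Two hypothetical party-ID assumptions, four points apart in each direction.
    mix_a = {"Dem": 0.44, "Rep": 0.31, "Ind": 0.25}
    mix_b = {"Dem": 0.40, "Rep": 0.35, "Ind": 0.25}
    print(round(topline_margin(groups, mix_a), 1), round(topline_margin(groups, mix_b), 1))
```

With these made-up numbers, moving four points of the assumed electorate from Democrats to Republicans shifts the reported margin from about 10 points to about 4 without a single answer changing, which is why a fixed target that misjudges the electorate skews everything downstream.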

Rasmussen has not always been poor, however; this year stood out, though its polls seem always to have leaned more conservative. “Rasmussen’s polls — after a poor debut in 2000 in which they picked the wrong winner in 7 key states in that year’s Presidential race — nevertheless had performed quite strongly in 2004 and 2006. And they were about average in 2008. But their polls were poor this year.”

He continues, “The discrepancies between Rasmussen Reports polls and those issued by other companies were apparent from virtually the first day that Barack Obama took office. Rasmussen showed Barack Obama’s disapproval rating at 36 percent, for instance, just a week after his inauguration, at a point when no other pollster had that figure higher than 20 percent.”

“Rasmussen Reports has rarely provided substantive responses to criticisms about its methodology,” Mr. Silver reports. “At one point, Scott Rasmussen, president of the company, suggested that the differences it showed were due to its use of a likely voter model. A FiveThirtyEight analysis, however, revealed that its bias was at least as strong in polls conducted among all adults, before any model of voting likelihood had been applied.”

“Some of the criticisms have focused on the fact that Mr. Rasmussen is himself a conservative — the same direction in which his polls have generally leaned — although he identifies as an independent rather than Republican,” Mr. Silver writes. “In our view, that is somewhat beside the point. What matters, rather, is that the methodological shortcuts that the firm takes may now be causing it to pay a price in terms of the reliability of its polling.”

None of this means Rasmussen cannot fix some of the problems in its polling by the next election cycle. My recommendation is to look at roughly a three-week window of polls and see where the various polling companies rank in terms of the margin between Democrats and Republicans. If a polling company is consistently at the top or the bottom, use caution with its polls.
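That three-week check can be sketched as a rough "house effect" calculation: average every poll of a single race released in the window, then see how far each pollster's numbers sit from that average. This is a minimal sketch, assuming all polls cover the same race and are recorded as Democrat-minus-Republican margins with their field dates; `house_effects` is a hypothetical helper, and it ignores sample size, likely-voter screens and genuine movement in the race during the window.

```python
from collections import defaultdict
from datetime import date, timedelta
from statistics import mean


def house_effects(polls, end: date, window_days: int = 21):
    """Rank pollsters by how far their margins sit from the average of all polls.

    polls: iterable of (pollster, field_date, margin) tuples for ONE race,
           where margin is Democrat minus Republican in points.
    Only polls dated in the `window_days` days ending on `end` are used.
    Returns (pollster, average deviation) pairs sorted from most
    Republican-leaning (most negative) to most Democratic-leaning.
    """
    start = end - timedelta(days=window_days)
    recent = [(name, margin) for name, day, margin in polls if start < day <= end]
    if not recent:
        return []
    overall = mean(margin for _, margin in recent)

    # Collect each pollster's deviations from the all-poll average.
    deviations = defaultdict(list)
    for name, margin in recent:
        deviations[name].append(margin - overall)

    effects = [(name, mean(devs)) for name, devs in deviations.items()]
    return sorted(effects, key=lambda pair: pair[1])
```

A pollster that lands at the same end of this list window after window is the one to treat with caution.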

I still think an average of polls is the best measure, as it tends to blur individual methodological issues and produces much less week-to-week noise. Short of that, pick a poll with a good track record over time, or one in the middle of the spread.

—David M. Greenwald reporting

Very interesting article David. I just discovered FiveThirtyEight.com a few weeks ago. Your description of Silver as a polling guru is very apt.

Take the Rasmussen poll, the Field poll, the average of all polls, or whatever gauge you want to use: the fact is this election was a drubbing for the Democrats, and now the Republicans control more state legislatures than at any time since 1928. It was a landslide and a total refutation of Obama’s policies.

Where’s that story on here?

As you may know, rusty, I don’t really cover national politics. Occasionally a local topic will touch on national issues, but for the most part I cover Davis, Yolo County, and state government. To the extent that I covered anything national, it was only in relation to what happened in California; that is why I wrote that the Tea Party does not appear to have come to California. But this is not a national political blog. It’s a local blog. I cover California politics because we live ten to fifteen miles from the doors of the Capitol and because it affects local funding immensely.

It was a landslide and a total refutation of Obama’s policies.

Where’s that story on here?

Why didn’t the Republicans capture the Senate? Why didn’t they make any solid gains in California?

wdf1: “Why didn’t the Republicans capture the Senate? Why didn’t they make any solid gains in California?”

The answer to your first question is that the Republicans very nearly did win the Senate. Three Senate races were extremely close, and could not be reported immediately. They eventually tipped Democratic, but all were squeakers. As it stands now, I believe Democrats have only a 4-seat advantage over the Republicans. As to your second question, CA is out of step with the rest of the country, which is more conservative than CA. CA is leaning further and further left, and is going to topple over and self-destruct if it isn’t careful. (I have my doubts about Jerry Brown, but I wish him every success.) Also, the Republicans put up a very poor candidate in Meg Whitman. Brown and Boxer also ran particularly vicious campaigns, which seems to be the order of the day in politics…

[quote]Three Senate races were extremely close, and could not be reported immediately. They eventually tipped Democratic, but all were squeakers.[/quote]

What is interesting is if you go back to 2006, control of the Senate and House were determined almost invariably by squeakers. In retrospect that should have served as a warning to Democrats, but Obama largely ignored the warning, pretended to be above the fray, and then failed to respond to a scurrilous series of charges.

For my coverage, California was the more interesting question, as my focus is on local politics, not national.

To dmg: What I think is that too many politicians on both the right and the left forget that most of the country is moderate. There will always be those who always vote Democratic and those who will always vote Republican. It is those in the center you have to woo if you want to win, and they can go either way depending on how the economy and foreign policy are doing. Either party ignores that fact at its peril…

Three Senate races were extremely close, and could not be reported immediately. They eventually tipped Democratic, but all were squeakers.

Which Senate races do you have in mind?

“Why didn’t the Republicans capture the Senate? Why didn’t they make any solid gains in California?”

wdf1, can you honestly and fairly say that the election wasn’t a butt whooping of the Democrats? I mean, come on, I’m sure even you can put aside your bias and admit that it was a landslide.

wdf1, can you honestly and fairly say that the election wasn’t a butt whooping of the Democrats? I mean, come on, I’m sure even you can put aside your bias and admit that it was a landslide.

I’m just asking the question. Do you have an answer?

The fact that only 1/3 of the Senate was up for election is the only reason that the Republicans did not gain a strong majority this year. The next election cycle, in 2012, may finish the job, but two years is a long time.

wdf1: “Which Senate races do you have in mind?”

Alaska, Colorado and Washington.

Alaska, Colorado and Washington.

Lisa Murkowski ran as a write-in Republican candidate and appears to have a solid chance at winning. It will take a while to figure it all out. Even if Murkowski loses, the next highest vote getter was the official Republican on the ballot, Joe Miller. Alaska will probably stay Republican, unless Murkowski is disgusted enough by the experience to “go rogue”.

“I’m sure even you can put aside your bias and admit that it was a landslide.”

Over the two prior election cycles, the Republicans lost 54 seats in the House. They regained those, plus another half dozen. Of those 60 (or so), about two dozen were very weak Dem seats to begin with, so they’ll probably retain them. Another two dozen or so will be very hard for the Reps to retain in 2012. And maybe a dozen are genuinely competitive from year to year.

So I fully expect that Democrats will recapture 20 – 30 seats in 2012 without any difficulty. If the economy is good, Democrats could regain control of the House. If it isn’t, they will just reduce the current margin. Meanwhile, the House Republican leadership can accomplish very little over the next two years, except any issues they and the Senate minority decide to work with the President and the Democrats on. So cooperation and compromise could yield better results for both sides, while confrontation and obstruction will benefit nobody.

“Meanwhile, the House Republican leadership can accomplish very little over the next two years, except any issues they and the Senate minority decide to work with the President and the Democrats on.”

I’ve got an idea, how about the Democrats work with the Republican House majority?

Why? The Republicans didn’t work with the Democrats when they were in the majority. Why shouldn’t the Republican House Majority work with the Democratic Senate and President?

The Democrats didn’t work with the Republicans either when they held the majority in all the branches. It just cracks me up when the Democrats cry that the Republicans won’t work with them when we all know they play the same game.

I don’t expect anyone to work with anyone. I expect complete gridlock. Which is why I think it is illogical to complain about government not doing anything and at the same time intentionally elect a divided government.

Obama stated that “elections have consequences” and “the Republicans will have to sit in the back of the bus”. How’s that for working together?

Do you make it a point to ignore what I say and respond to something else?

I’ll repeat: I don’t expect anyone to work with anyone. I expect complete gridlock. Which is why I think it is illogical to complain about government not doing anything and at the same time intentionally elect a divided government.

It discourages me to hear Mitch McConnell say publicly and confidently that his top priority is to make Obama a 1-term president. I get it that these parties will always be in loyal opposition, and there’s a value to that. But for the good of the country, I would prefer hearing something like “helping the economy” as a top priority.

And Democrats have been more cooperative than Republicans in being bipartisan. Immediately I can think of No Child Left Behind, the Patriot Act, and the approval of Roberts and Alito as major instances where Dems crossed over more willingly.

The Republicans passed Sotomayor and Kagan. The Democrats blocked Harriet Miers, but I can’t think of any Obama appointees that have been blocked. The Democrat minority tried to block almost all of Bush’s judicial nominees.

There have been many laws enacted under Democrat rule that the Republicans have backed and vice versa, so don’t try and spin that “Democrats have been more cooperative than Republicans in being bipartisan”, not if you’re fair anyway.

Can you be fair and unbiased?

AP, via Huffington Post:

“WASHINGTON — A determined Republican stall campaign in the Senate has sidetracked so many of the men and women nominated by President Barack Obama for judgeships that he has put fewer people on the bench than any president since Richard Nixon at a similar point in his first term 40 years ago.

The delaying tactics have proved so successful, despite the Democrats’ substantial Senate majority, that fewer than half of Obama’s nominees have been confirmed and 102 out of 854 judgeships are vacant.

Forty-seven of those vacancies have been labeled emergencies by the judiciary because of heavy caseloads.”

wdf1: “It discourages me to hear Mitch McConnell say publicly and confidently that his top priority is to make Obama a 1-term president. I get it that these parties will always be in loyal opposition, and there’s a value to that. But for the good of the country, I would prefer hearing something like “helping the economy” as a top priority.”

I couldn’t agree with you more. This sort of rhetoric is unseemly, even if the other side engages in it too. It takes two to fight and two to cooperate.

dmg: “I’ll repeat: I don’t expect anyone to work with anyone. I expect complete gridlock. Which is why I think it is illogical to complain about government not doing anything and at the same time intentionally elect a divided government.”

So what are you saying here, the electorate should have put in more Democrats, so the gov’t would not be so divided, even if the electorate does not agree with what Obama is doing? At least this way Obama cannot do any more damage hopefully…

The Democrats blocked Harriet Miers,

Harriet Miers withdrew her nomination as much because of concerns and opposition from prominent Republicans (Limbaugh, George Will, William Kristol, Robert Bork) as because of predictable skepticism from Democrats. In reports from both Republicans and Democrats, she did terribly in mock confirmation preps and interviews with senators; in other words, she probably wouldn’t have performed well in her confirmation hearings.

The Democrat minority tried to block almost all of Bush’s Judicial Court nominees.

Here is a list of judicial appointments by president:

[url]http://en.wikipedia.org/wiki/List_of_Presidents_of_the_United_States_by_judicial_appointments[/url]

To be fair we have to see what happens by the end of a presidency to judge, but so far, Obama isn’t on track to have as many judicial appointments approved, even for a one-term president.

“Obama isn’t on track to have as many judicial appointments approved, even for a one-term president.”

Let’s hope so.

There have been many laws enacted under Democrat rule that the Republicans have backed and vice versa, so don’t try and spin that “Democrats have been more cooperative than Republicans in being bipartisan”, not if you’re fair anyway.

Then can you name any major pieces of legislation since January 2009 that had solid bipartisan support?

He continues, “In just 12 cases, Rasmussen’s polls overestimated the margin for the Democrat by 3 or more points. But it did so for the Republican candidate in 55 cases — that is, in more than half of the polls that it issued.”

Rasmussen has for years tended to slant towards the GOP even when that slant’s not supported by data–but interestingly you see the Rasmussen polls pop up on “liberal” sites such as TPM. Weren’t some of the pollsters saying Boxer and Fiorina were in a tough battle, etc? BS. It’s like a marketing spin.

That said, the CA Dems’ victories don’t mean a great shift to the left. Brown’s a fiscal conservative, for the most part. Boxer may be a bit more liberal but still pro-business (not to say a union darling). Nor do the TP victories signal a great rightist movement (perhaps in some rural areas…). The TP/right/GOP may be over-ebullient, while sentimental leftists may be overreacting–as with Davis’s own Byronius Belchamy of “New Worlds,” who has sounded the hysteria-crat alarm: [url]http://new-worlds.org/blog/?p=8217[/url]

F**k those nasty TPsters! B-ron at least plagiarizes a liberal who can write somewhat coherently. At the same time, CA Dems have 13-14% more registered voters than CA GOP (or TP). The rise of the nutcase right may be a problem in fly-over zones–not in CA too much (and the nutcase…left is not entirely without flaws)

An interesting statistical analysis of the recent election:

[url]http://fivethirtyeight.blogs.nytimes.com/2010/11/08/2010-an-aligning-election/[/url]